In 2017, a New York-based iced tea company announced that it was "pivoting to blockchain," and its stock price jumped 200%.

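I bring up this story because, six years later, AI (artificial intelligence) is as buzzy as blockchain was. Marketing departments have overused the term so much that what "AI" means, and more importantly how AI can strengthen cybersecurity, gets lost in the noise.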

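That was evident at the KuppingerCole European Identity and Cloud Conference 2023, where I presented on AI's potential to prevent account takeover. There is more to AI than hype, but its real value in building stronger cybersecurity and moving organizations closer to zero trust can be drowned out by all the buzz.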

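So let's define our terms and get into the details. Let's look at some ways cybersecurity can actually use AI to process authentication, entitlement, and usage data. And let's define the types of AI that are most effective at improving an organization's cybersecurity stance.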

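Cybersecurity tends to be broken down into core competencies: multi-factor authentication (MFA) to secure access, identity governance and administration (IGA) to enforce least privilege, security information and event management (SIEM) to monitor usage, and so on.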

Each of these competencies is highly specialized, deploys its own tools, and defends against different risks. What they have in common is that they all produce mountains of data.

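Take identity. More users, devices, entitlements, and environments are being added than ever before. According to a 2021 survey, identities are growing far faster than humans can keep up with: more than 80% of respondents said the number of identities they manage had more than doubled, and 25% reported a tenfold increase.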

Identity is rapidly becoming a data problem. That is why AI can be a significant asset: if you frame the question correctly and know what to ask, AI can quickly make sense of large amounts of data.

Here are three questions that cybersecurity professionals can use AI to answer in order to prevent risks and detect threats:

#1. Authentication: use AI to understand who is trying to get in

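AI can process authentication data to assess who is trying to authenticate into a system. It does this by examining each user's context: the device they are using, the time they are trying to gain access, the location they are accessing from, and so on.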

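The next step is to compare that current information with that specific user's past behavior. If I authenticate this week from the same laptop, at the same time of day, and from the same IP address as last week, my context probably looks pretty good.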

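Alternatively, if someone claiming to be me tries to log in at 3 a.m. from a new device and a new IP address, AI should automate step-up authentication and challenge that suspicious behavior.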
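The comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `LoginContext` fields, the hand-picked weights, and the 0.5 threshold are all hypothetical, and a production system would learn these from data rather than hard-code them. It is not a description of any particular product's scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginContext:
    device_id: str
    ip_address: str
    hour: int  # local hour of day, 0-23


def hour_gap(a: int, b: int) -> int:
    """Circular distance between two hours, so 23:00 and 01:00 are 2 apart."""
    d = abs(a - b) % 24
    return min(d, 24 - d)


def risk_score(current: LoginContext, history: list) -> float:
    """Return a score in [0, 1]; higher means the context is less familiar.

    The weights (0.4 / 0.4 / 0.2) are illustrative assumptions, not learned values.
    """
    if not history:
        return 1.0  # no baseline yet, so treat the attempt as unfamiliar
    known_devices = {h.device_id for h in history}
    known_ips = {h.ip_address for h in history}
    nearest_hour = min(hour_gap(current.hour, h.hour) for h in history)

    score = 0.0
    if current.device_id not in known_devices:
        score += 0.4
    if current.ip_address not in known_ips:
        score += 0.4
    if nearest_hour > 2:  # more than two hours away from any past login
        score += 0.2
    return score


def decide(current: LoginContext, history: list, step_up_threshold: float = 0.5) -> str:
    """Return 'step_up' when the context looks unfamiliar, otherwise 'allow'."""
    return "step_up" if risk_score(current, history) >= step_up_threshold else "allow"
```

With a history of one login from a known laptop at 09:00, the same laptop at the same hour is allowed, while a new device on a new IP address at 03:00 triggers step-up authentication. Because the history is passed in on every call, the baseline can keep changing as the user's behavior changes.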

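Importantly, the determination of what counts as "good context" and what counts as "bad context" is not static. Instead, AI needs to continually reassess that determination so that it reflects the overall behavior of the user and the organization and adapts to what "normal" looks like. User behavior changes all the time, and AI always needs to account for that.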

Static rule sets are not granular enough to account for individual context, and they lack the depth of reference data needed to automate responses with confidence.

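Put another way, a static rule set cannot know whether it is normal for me, specifically, to try to sign in for the 8th time within 60 minutes at 23:00 German time. That might be normal behavior for me, or it might be suspicious. Either way, a static rule set cannot tell.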

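For nearly 20 years, RSA has used machine learning algorithms and behavioral analytics to help customers define "good" and "bad" context and automate responses to user behavior. RSA Risk AI complements traditional authentication and access technologies by processing the vast amounts of data that users generate, helping organizations make smarter, faster, and safer access decisions at scale.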

#2. Accounts and entitlements: use AI to learn what someone can access

AI processes authentication data to find out who is trying to gain access. It reviews account and entitlement data to answer a different question: what could someone access?

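AI answers this by examining accounts and entitlements across applications. Doing so helps organizations move toward least privilege, a key component of zero trust. It also helps identify segregation-of-duties violations.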

For humans, processing account and entitlement data is close to impossible. Manually reviewing that much data is bound to fail: a human reviewer will most likely hit "approve all" and be done with it.

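But while thorough entitlement reviews are labor-intensive, they are highly valuable, especially for strengthening cybersecurity. Because humans are so quick to press the "approve all" button, we create accounts with far more entitlements than they need: only 2% of entitlements are used.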

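These entitlement risks grow as organizations integrate more cloud environments: Gartner predicts that inadequate management of identities, access, and privileges will cause 75% of cloud security failures, and says that half of enterprises may mistakenly expose some resources directly to the public.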

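By searching entitlement data, organizations can find valuable insights, such as outlier users. Outlier users look a lot like other users but hold a different combination of entitlements. Those differences may not be as obvious as a segregation-of-duties violation, but they may still be significant enough for AI to recognize. Access reviews can then focus on those outlier users rather than on the other 99% of users, whose entitlements are considered low-risk or who have previously been approved for the same entitlements.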
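One simple way to picture outlier detection is to compare each user's entitlements with those of their peers and flag anything that few peers hold. The sketch below assumes that every user is already assigned to a peer group (for example a department); the function name `find_outliers`, the input shape, and the 25% rarity cutoff are hypothetical choices made for illustration, not a description of a specific product.

```python
from collections import Counter, defaultdict


def find_outliers(users: dict, rare_fraction: float = 0.25) -> dict:
    """Flag entitlements that are rare within a user's peer group.

    users maps a user name to (peer_group, set_of_entitlements).
    Returns a dict of user name -> sorted list of rare entitlements.
    """
    # Collect the members of each peer group.
    groups = defaultdict(list)
    for name, (group, _entitlements) in users.items():
        groups[group].append(name)

    outliers = {}
    for members in groups.values():
        # Count how many peers hold each entitlement.
        counts = Counter(e for m in members for e in users[m][1])
        for m in members:
            rare = sorted(
                e for e in users[m][1]
                if counts[e] / len(members) <= rare_fraction
            )
            if rare:
                outliers[m] = rare
    return outliers
```

For a finance group of five users who all hold `erp_read` and `expenses`, where only one of them also holds `payments_approve`, the function returns just that user and that one entitlement. A reviewer then looks at one line instead of fifteen.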

Finding a tiny needle in a towering haystack is easy for AI but impossible for humans.

#3. Application usage: use AI to learn what someone is actually doing

AI looks at authentication data to determine who is trying to gain access. It looks at entitlement data to understand what that person could access.

With application usage data, AI is trying to answer what someone actually does.

AI can see the resources I actually used to write this blog post, the people I asked for help, the data I referenced, the apps I used, and so on.

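Importantly, this analysis can also reveal things that someone should not be doing: fraudulent, illegal, non-compliant, or risky actions. AI can process application usage data to find and address those mistakes. Sure, you have entitlements to that sensitive SharePoint site, but why have you downloaded a large number of files from it in the last 30 minutes?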
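The SharePoint question above amounts to counting events in a sliding time window. The following sketch assumes download events arrive as `(timestamp, user)` pairs sorted by time. The function name, the event shape, and the fixed limit of 50 downloads per 30 minutes are hypothetical. A fixed limit is used here only to keep the example short; in line with the argument earlier in this post, a real system would compare against each user's own learned baseline.

```python
from collections import deque
from datetime import datetime, timedelta


def flag_download_bursts(events, window=timedelta(minutes=30), max_downloads=50):
    """Return the set of users who exceed max_downloads within any window.

    events must be an iterable of (timestamp, user) pairs sorted by timestamp.
    """
    recent = {}  # user -> deque of timestamps still inside the window
    flagged = set()
    for ts, user in events:
        q = recent.setdefault(user, deque())
        q.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while ts - q[0] > window:
            q.popleft()
        if len(q) > max_downloads:
            flagged.add(user)
    return flagged
```

A user who downloads 60 files ten seconds apart is flagged, while a user who downloads 60 files five minutes apart is not, because no 30-minute window ever contains more than a handful of their events.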

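There are broadly two types of AI: deterministic and non-deterministic. Machine learning is mostly deterministic AI, and it is good at processing structured data. Deep learning is often a type of non-deterministic AI, and it is good at processing unstructured data.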

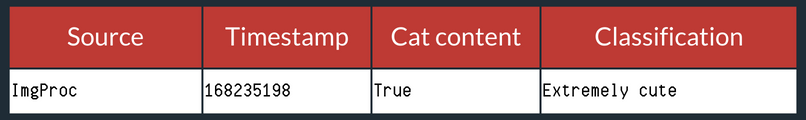

Put another way, deep learning could look at this image and answer, "Where is the cat in this picture?"

Machine learning could look at a log file and answer, "What happened at timestamp 168235198?"

In security, when we use AI to evaluate authentication, entitlement, and application usage data, the information we are looking at is almost entirely structured data.

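That means that in cybersecurity we want to use machine learning, that is, deterministic AI. Deterministic AI is more transparent than non-deterministic AI. I say "more transparent" because, to be honest, not all of us (myself included) have the advanced math knowledge needed to follow every detail. But the broad strokes of a deterministic ML model can be explained to a wide audience.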

There is research underway to better understand how non-deterministic AI works. I am curious to see whether we will ever fully understand how neural networks and other non-deterministic AI actually work.

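Just as important as handling structured input is the output that deterministic AI produces. Non-deterministic AI is closer to a black box. For example, we humans cannot know exactly how a non-deterministic AI generated a given image. There is an input at the start and an output at the end, and if you open up the black box in the middle, you see a fantasy land of dragons and unicorns.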

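With deterministic AI, we can know how the model arrived at its answer. In theory, you could check a deterministic AI's work by manually plugging in the same inputs and arriving at the same answer. It would just take an enormous amount of scratch paper, coffee, and sanity to do by hand, and it could not be done in real time.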
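To make "checking the work" concrete, here is a toy scoring model whose answer can be reproduced by hand. It is a sketch under stated assumptions: the feature names, the weights in `WEIGHTS`, and the `BIAS` value are invented for illustration and were not learned from any real data. The point is only that the same inputs always give the same output, and that each feature's contribution can be read off directly.

```python
import math

# Hypothetical, hand-picked parameters; a real model would learn these from data.
WEIGHTS = {"new_device": 1.5, "new_ip": 1.2, "odd_hour": 0.8}
BIAS = -2.0


def explain(features: dict):
    """Return (risk probability, per-feature contributions) for 0/1 features."""
    contributions = {
        name: weight * features.get(name, 0) for name, weight in WEIGHTS.items()
    }
    logit = BIAS + sum(contributions.values())
    probability = 1 / (1 + math.exp(-logit))  # logistic function
    return probability, contributions
```

For a login with all three flags set, the contributions are 1.5, 1.2, and 0.8, the sum with the bias is 1.5, and the logistic function of 1.5 is about 0.82. An auditor can redo that arithmetic on paper, which is exactly the kind of transparency that the surrounding text describes.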

For security and audit teams, transparency and an understanding of how the AI works are essential for maintaining compliance and applying for certifications.

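That is not to say there is no role for non-deterministic or deep learning algorithms in cybersecurity. Deep learning may find answers we were not looking for, and it can be used to improve deterministic models. But cybersecurity professionals would need to place considerable trust in that black box and in what it produces.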

We are betting big on AI, and we believe it will play an important role in building stronger cybersecurity.

Authentication, access, governance, and lifecycle need to work together to protect the entire identity lifecycle.

Combining these functions into a unified identity platform also creates more data inputs with which to train AI to be smarter.